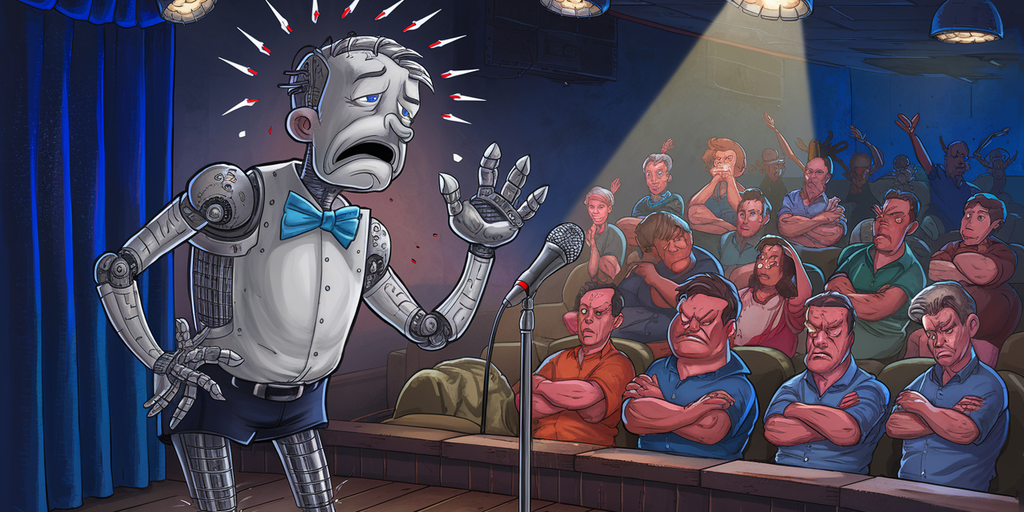

Comedy and humor are endlessly nuanced and subjective, but researchers at Google DeepMind found agreement among professional comedians: “AI is very bad at it.”

That was one of many comments collected in a study of twenty professional comedians and performers, conducted through workshops at the Edinburgh Festival Fringe in August 2023 and online. The findings showed that large language models (LLMs) accessed via chatbots presented significant challenges and raised ethical concerns about the use of AI in generating funny material.

The research involved a three-hour workshop in which comedians engaged in a comedy writing session with popular LLMs like ChatGPT and Bard. It also assessed the quality of output via a human-computer interaction questionnaire based on the decade-old Creativity Support Index (CSI), which measures how well a tool supports creativity.

The participants also discussed the motivations, processes, and ethical concerns of using AI in comedy in a focus group.

The researchers asked comedians to use AI to write standup comedy routines and then had them evaluate the results and share their thoughts. The results were… not good.

One of the participants described the AI-generated material as “the most bland, boring thing—I stopped reading it. It was so bad.” Another one referred to the output as “a vomit draft that I know that I’m gonna have to iterate on and improve.”

“And I don’t want to live in a world where it gets better,” another said.

The study found that LLMs were able to generate outlines and fragments of longer routines, but lacked the distinctly human elements that make something funny. When asked to generate the structure of a draft, the models “spat out a scene which provided a lot of structure,” but when it came to the details, “LLMs did not succeed as a creativity support tool.”

Among the reasons, the authors note, was the “global cultural value alignment of LLMs,” as the tools used in the study generated output based on all accumulated material, spanning every possible discipline. This also introduced a form of bias, which the comedians pointed out.

“Participants noted that existing moderation strategies used in safety filtering and instruction-tuned LLMs reinforced hegemonic viewpoints by erasing minority groups and their perspectives, and qualified this as a form of censorship,” the study said.

Popular LLMs are restricted, the researchers said, citing the so-called “HHH criteria,” which call for honest, harmless, and helpful output and encapsulate the “majority of what users want from an aligned AI.”

The material was described by one panelist as “cruise ship comedy material from the 1950s, but a bit less racist.”

“The broader appeal something has, the less great it could be,” another participant said. “If you make something that fits everybody, it probably will end up being nobody’s favorite thing.”

The researchers emphasized the importance of considering the subtle difference between harmful speech and offensive language used in resistance and satire. The comedians, meanwhile, also complained that the AI failed because it did not understand nuances like sarcasm, dark humor, or irony.

“A lot of my stuff can have dark bits in it, and then it wouldn’t write me any dark stuff, because it sort of thought I was going to commit suicide,” a participant reported. “So it just stopped giving me anything.”

The fact that the chatbots were based on written material didn’t help, the study found.

“Given that current widely available LLMs are primarily accessible through a text-based chat interface, they felt that the utility of these tools was limited to only a subset of the domains needed for producing a full comedic product,” the researchers noted.

“Any written text could be an okay text, but a great actor could probably make this very enjoyable,” a participant said.

The study revealed that AI’s limitations in comedy writing extend beyond simple content generation. The comedians stressed that perspective and point of view are uniquely human traits, with one comedian noting that humans “add much more nuance and emotion and subtlety” due to their lived experience and relationship to the material.

Many described the centrality of personal experience in good comedy, enabling them to draw upon memories, acquaintances, and beliefs to construct authentic and engaging narratives. Moreover, comedians stressed the importance of understanding cultural context and audience.

“The kind of comedy that I could do in India would be very different from the kind of comedy that I could do in the U.K., because my social context would change,” one of the participants said.

Thomas Winters, one of the researchers cited in the study, explained why this is a tough thing for AI to tackle.

“Humor’s frame-shifting prerequisite reveals its difficulty for a machine to acquire,” he said. “This substantial dependency on insight into human thought—memory recall, linguistic abilities for semantic integration, and world knowledge inferences—often made researchers conclude that humor is an AI-complete problem.”

Addressing the threat AI poses to human jobs, OpenAI CTO Mira Murati recently said that “some creative jobs maybe will go away, but maybe they shouldn’t have been there in the first place.” Given the current capabilities of the technology, however, it seems like comedians can breathe a sigh of relief.

Edited by Ryan Ozawa.


Source: https://decrypt.co/236599/ai-comedy-humor-study-censorship